Data Labeling: The Authoritative Guide

The authoritative guide to data labeling for machine learning. Learn about image, video, and text annotation, best practices, and how to ensure high-quality labels.

Data Labeling for Machine Learning

Machine learning has revolutionized our approach to solving problems in computer vision and natural language processing. Powered by enormous amounts of data, machine learning algorithms are incredibly good at learning and detecting patterns in data and making useful predictions, all without being explicitly programmed to do so.

Trained on large amounts of image data, computer models can predict objects with very high accuracy. They can recognize faces, cars, and fruit, all without requiring a human to write software programs explicitly dictating how to identify them.

Similarly, natural language processing models power modern voice assistants and chatbots we interact with daily. Trained on enormous amounts of audio and text data, these models can recognize speech, understand the context of written content, and translate between different languages.

Instead of engineers attempting to hand-code these capabilities into software, machine learning engineers program these models with a large amount of relevant, clean data. Data needs to be labeled to help models make these valuable predictions. Data labeling is one of machine learning's most critical and overlooked activities.

This guide aims to provide a comprehensive reference for data labeling and to share practical best practices derived from Scale's extensive experience in addressing the most significant problems in data labeling.

What is Data Labeling?

Data labeling is the activity of assigning context or meaning to data so that machine learning algorithms can learn from the labels to achieve the desired result.

To better understand data labeling, we will first review the types of machine learning and the different types of data to be labeled. Machine learning has three broad categories: supervised, unsupervised, and reinforcement learning. We will go into more detail about each type of machine learning in Why is Data Annotation Important?

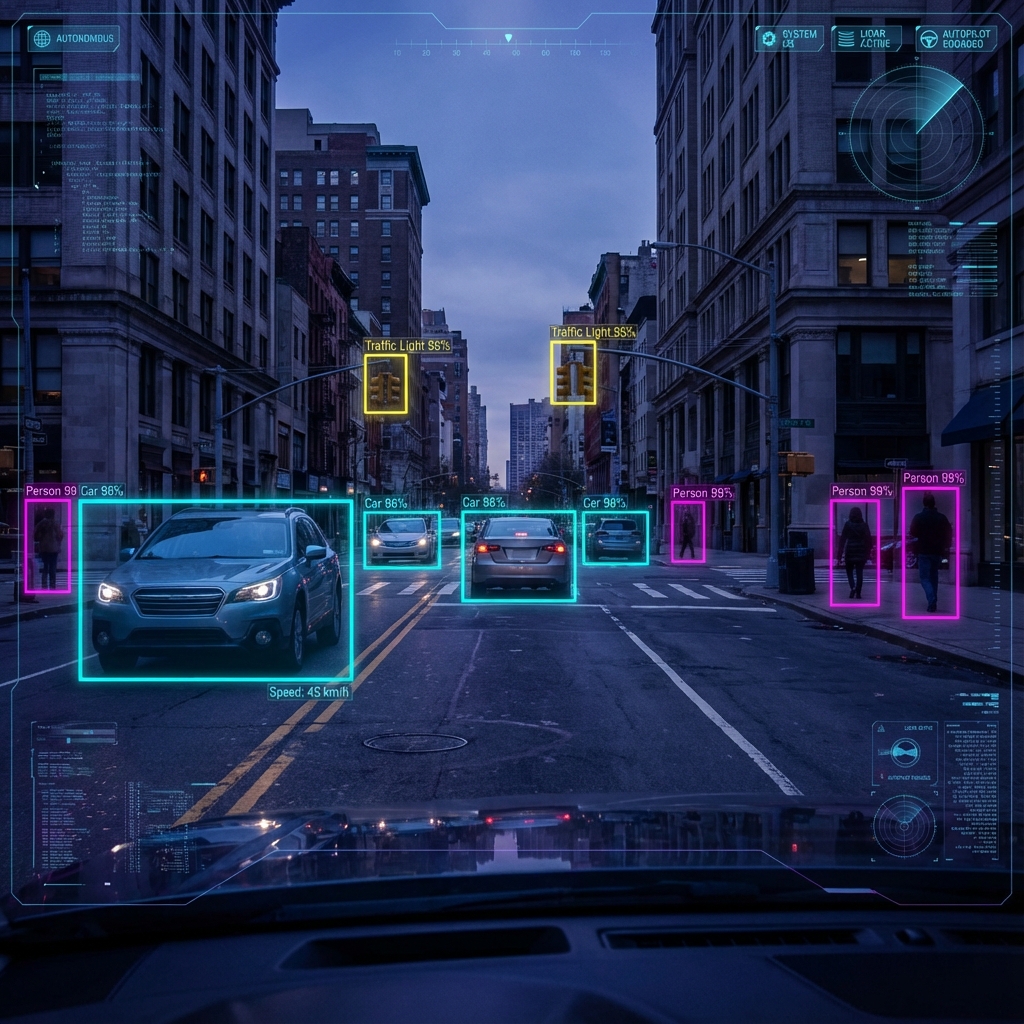

Supervised machine learning algorithms leverage large amounts of labeled data to "train" neural networks or models to recognize patterns in the data that are useful for a given application. Data labelers define ground truth annotations to data, and machine learning engineers feed that data into a machine learning algorithm. For example, data labelers will label all cars in a given scene for an autonomous vehicle object recognition model. The machine learning model will then learn to identify patterns across the labeled dataset. These models then make predictions on never before seen data.

Types of Data

Structured vs. Unstructured Data

Structured data is highly organized, such as information in a relational database (RDBMS) or spreadsheet. Customer information, phone numbers, social security numbers, revenue, serial numbers, and product descriptions are structured data.

Unstructured data is data that is not structured via predefined schemas and includes things like images, videos, LiDAR, Radar, some text data, and audio data.

Images

Camera sensors output data initially in raw format and then converted to .png or preferably .jpg files, which are compressed and take up less storage than .png, which is a serious consideration when dealing with the large amounts of data needed to train machine learning models. Image data is also scraped from the internet or collected by 3rd party services. Image data powers many applications, from face recognition to manufacturing defect detection to diagnostic imaging.

Videos

Video data also come from camera sensors in raw format and consist of a series of frames stored as .mp4, .mov, or other video file formats. MP4 is a standard in machine learning applications due to its smaller file size, similar to .jpg for image data. Video data enables applications like autonomous vehicles and fitness apps.

Task Audit

Why is Data Annotation Important?

"Garbage in, garbage out." — The fundamental axiom of Machine Learning.

Data is the fuel for modern AI. Without high-quality labeled data, even the most sophisticated algorithms will fail to perform. To ensure your models are robust, accurate, and unbiased, you must prioritize data quality from the very beginning of your ML pipeline.

Accurate annotations provide the ground truth that models compare their predictions against during training. Errors in this ground truth propagate directly into model errors, often magnified. In safety-critical applications like autonomous driving or medical diagnosis, the margin for error is effectively zero, necessitating rigorous quality assurance.

How to Annotate Data

In-House Teams

Highest quality control, but expensive and hard to scale. Best for sensitive data.

Crowdsourcing

Scalable and cost-effective, but requires robust QA mechanisms to filter noise.

Choosing the right annotation workforce depends on your data volume, complexity, and security requirements. Modern approaches also utilize Synthetic Data—data generated programmatically—to bootstrap models in data-scarce environments.

High-Quality Data Annotations

Achieving high-quality annotations is an iterative process. It involves creating a "Gold Set" of perfectly labeled data to benchmark annotators against.

- Develop clear, unambiguous labeling instructions

- Implement consensus voting (multiple annotators per item)

- Regularly audit annotations against the Gold Set

- Provide continuous feedback to annotation teams

Data Labeling for Computer Vision

Computer vision tasks rely on precise spatial annotations. From 2D bounding boxes for object detection to pixel-perfect semantic segmentation for scene understanding, the granularity of labeling dictates the model's capabilities.

NLP Data Labeling

In Natural Language Processing, context is king. Labeling involves Named Entity Recognition (NER) to identify people, places, and organizations, as well as Sentiment Analysis to gauge emotional tone.

Modern LLMs require even more complex annotation, such as "Reinforcement Learning from Human Feedback" (RLHF), where humans rank model outputs to align them with human, helpful, and harmless intent.

Conclusion

Data labeling is not just a utility; it is a strategic asset. By investing in high-quality data pipelines, you build a moat around your AI products. As models become commoditized, your proprietary, high-quality labeled data becomes your most valuable differentiator.